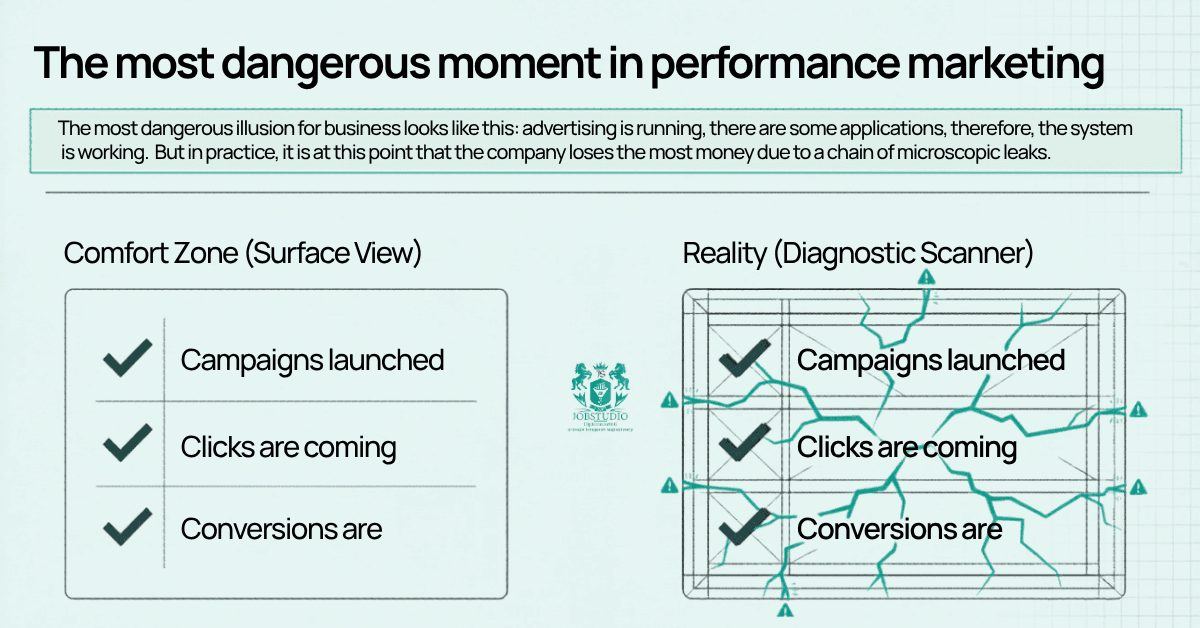

In paid traffic projects, the most dangerous misconception goes like this: the ads are already running, the campaigns are active, and there are some conversions—so the system must be working overall. In reality, this is precisely when businesses often lose the most money.

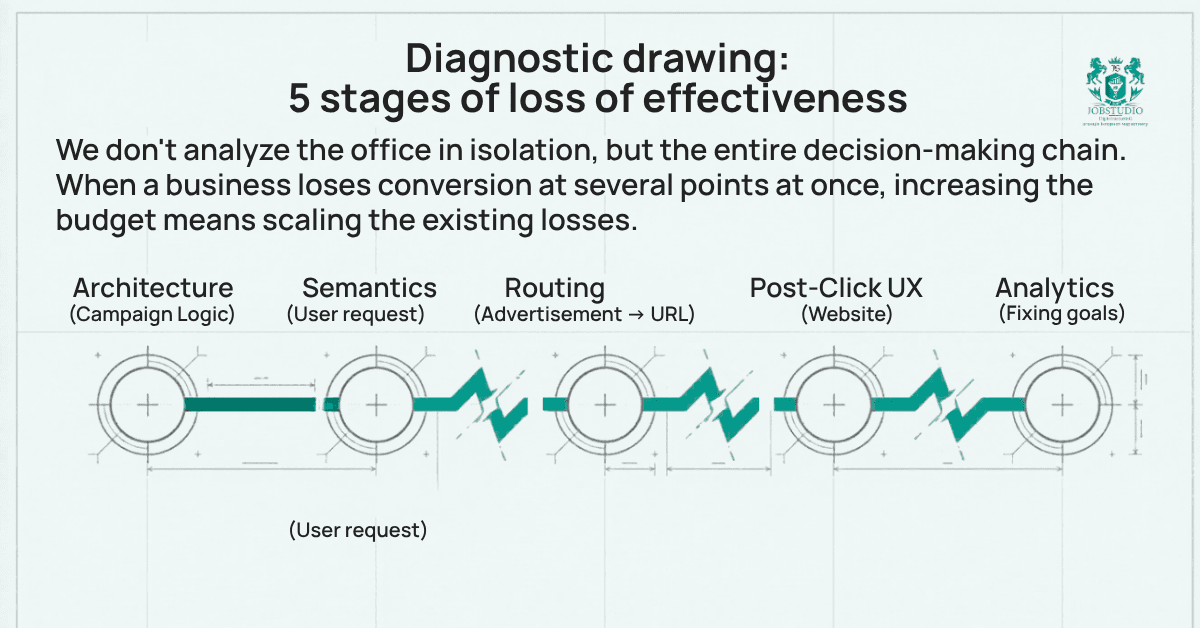

Not because of a single “fatal” mistake, but because of a series of minor and moderate oversights: in the account structure, in the semantics, in the “query → ad → page” flow, in goal tracking, and even on the website itself.

That’s exactly what we saw in this Google Ads audit.

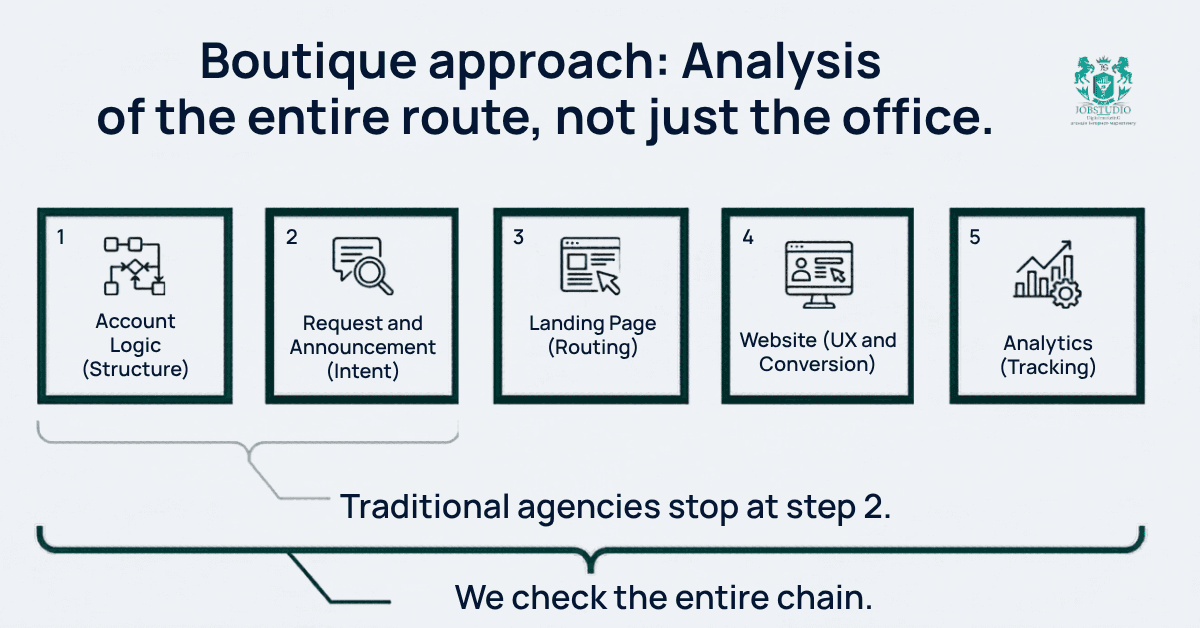

This is precisely the approach that sets a boutique performance agency apart from run-of-the-mill, “cookie-cutter” work. We don’t view the project in isolation. We analyze the entire user journey: what the user types into the search bar, which ads they see, where they click, whether they can perform an action on the site, and whether that action will be correctly tracked in analytics. When a business loses effectiveness at several points at once, increasing the budget without such diagnostics means amplifying existing losses.

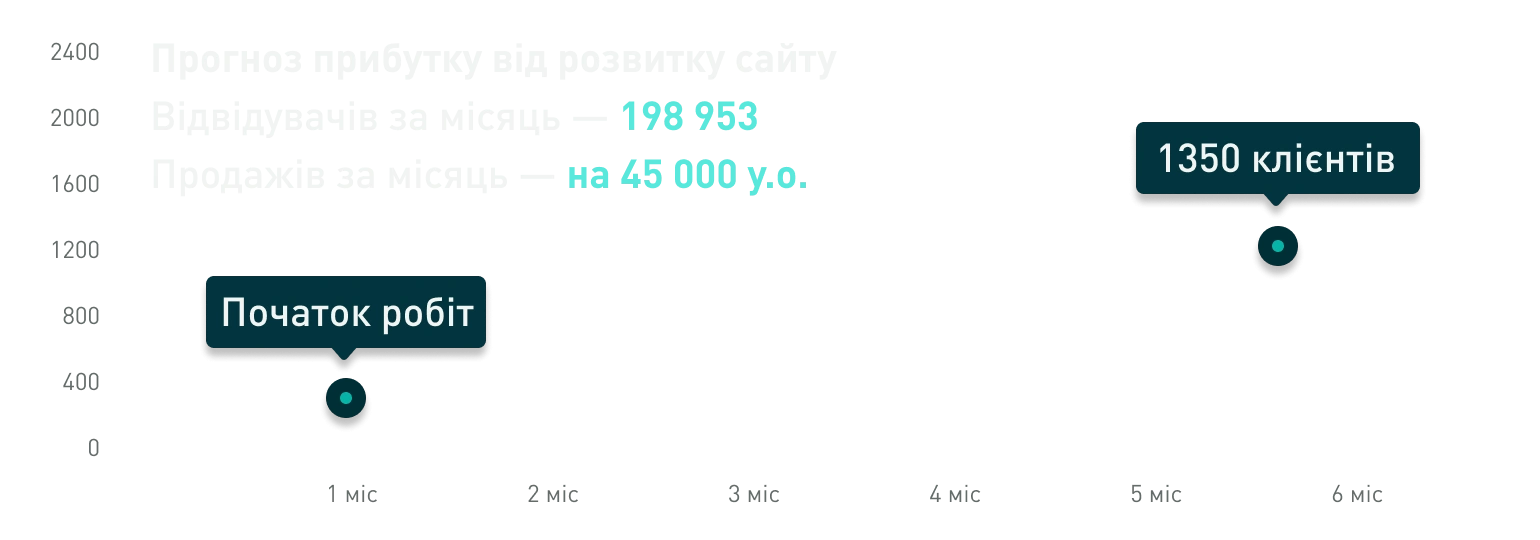

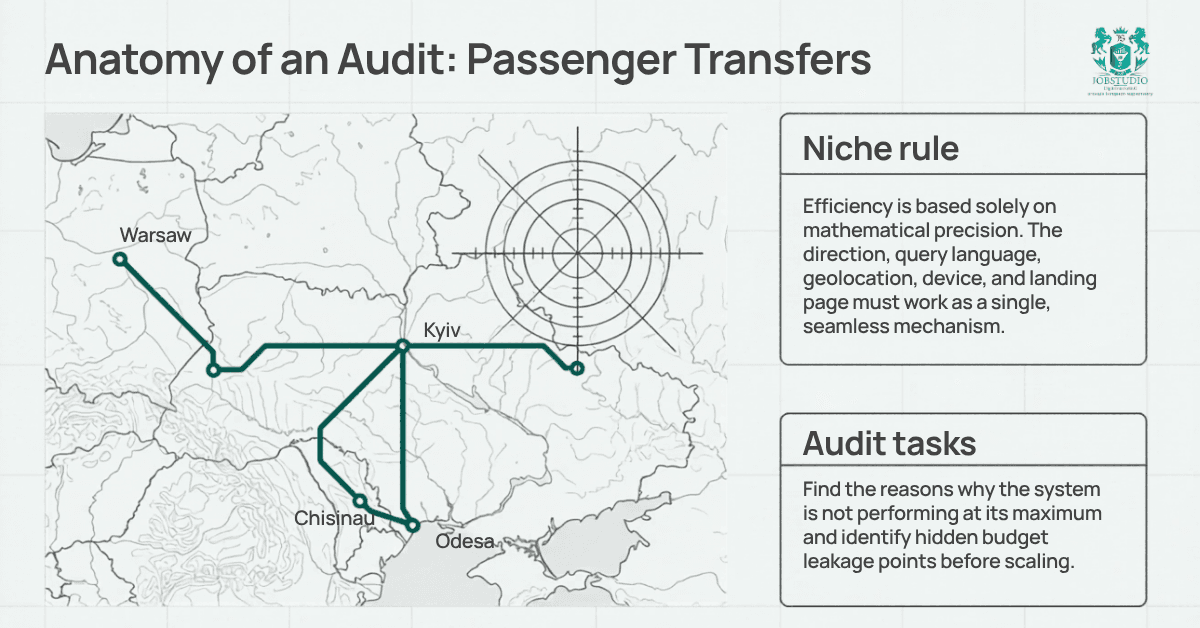

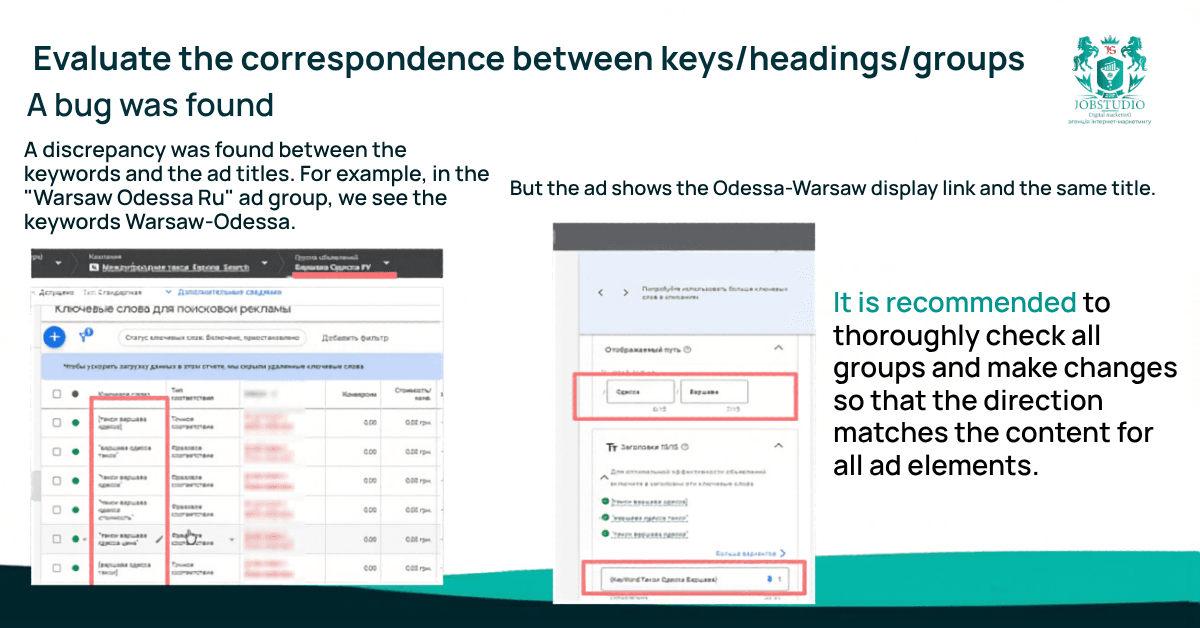

The project focused on passenger transportation and transfers, with advertising centered on intercity and international routes. This is a crucial detail because, in this niche, effectiveness depends on precision: the destination, the language of the query, the user’s location, the device, the landing page, and the call-to-action must all work together as a unified system. The audit already revealed that the account contained separate route groups such as “Kyiv–Warsaw,” “Warsaw–Kyiv,” “Chisinau–Odesa,” and “Odesa–Chisinau,” meaning the campaign logic had to be as precise and segmented as possible.

The goal of the audit wasn’t simply to “take a look at the ads,” but to identify the reasons why the current system wasn’t performing at its best. The business needed to understand exactly where efficiency was being lost: in the campaign structure, in ad quality, in keywords, in budget allocation, in conversion tracking, or post-click—at the website level. That is why the audit covered not just one component, but the entire chain: from the user’s query to the action on the page.

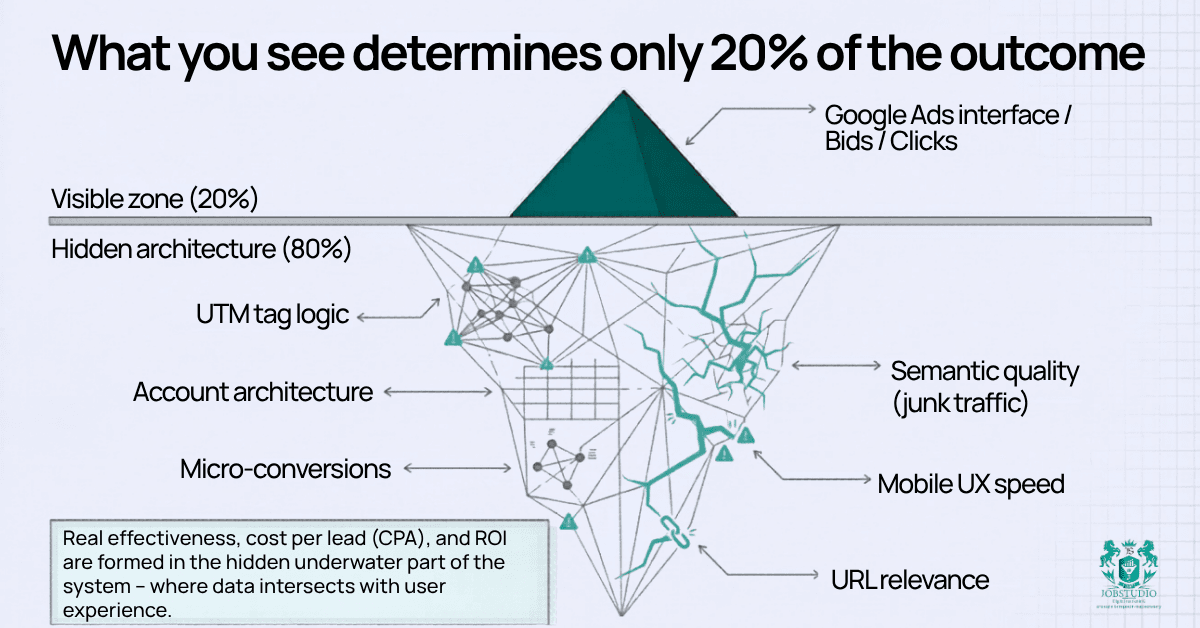

Why a single glance at the ad account wasn’t enough

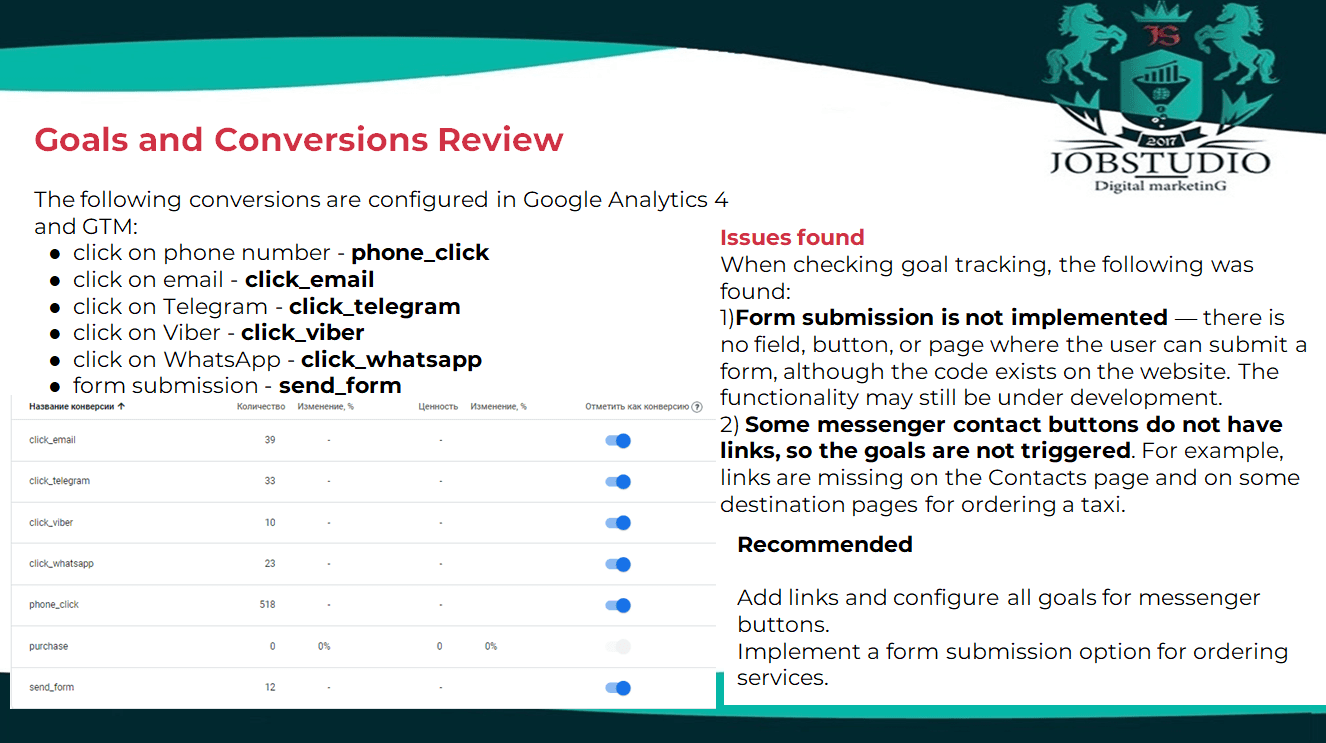

If you look only at the Google Ads interface, you can identify some of the issues. But not all of them. In this project, for example, conversions were set up in Google Analytics 4 and GTM for clicks on phone numbers, email addresses, Telegram, Viber, WhatsApp, and form submissions. At the same time, the audit revealed that the form itself had not actually been implemented, and some of the messenger buttons lacked links entirely—specifically on the contact page and certain landing pages. In other words, in theory, the system appeared to be set up, but in practice, some users simply could not complete the action.

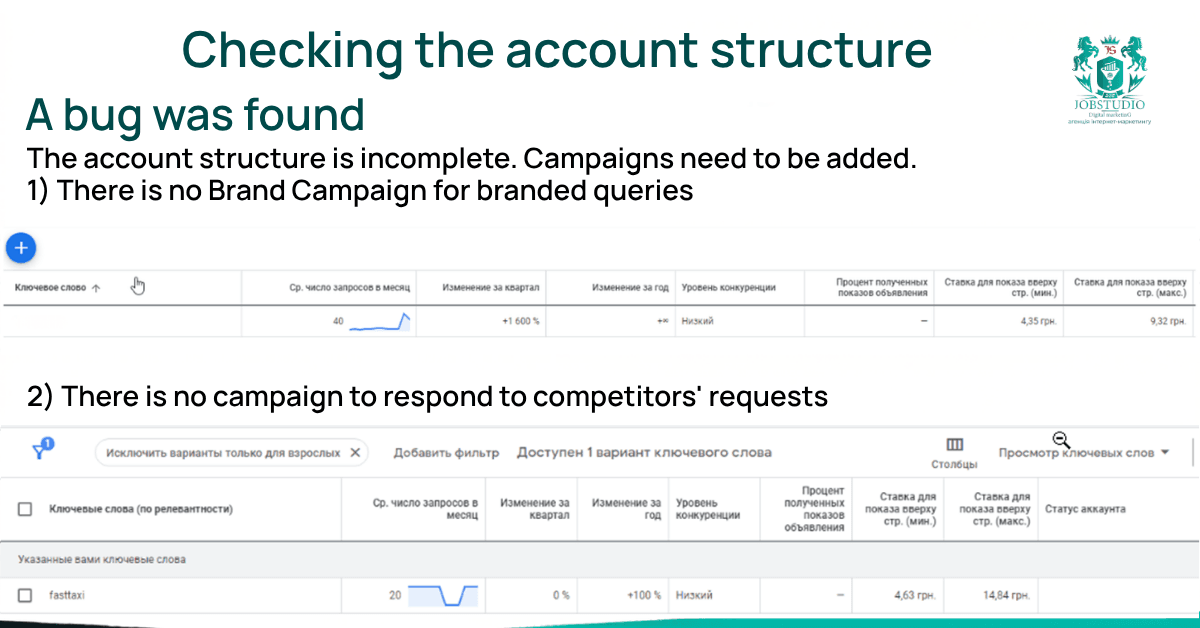

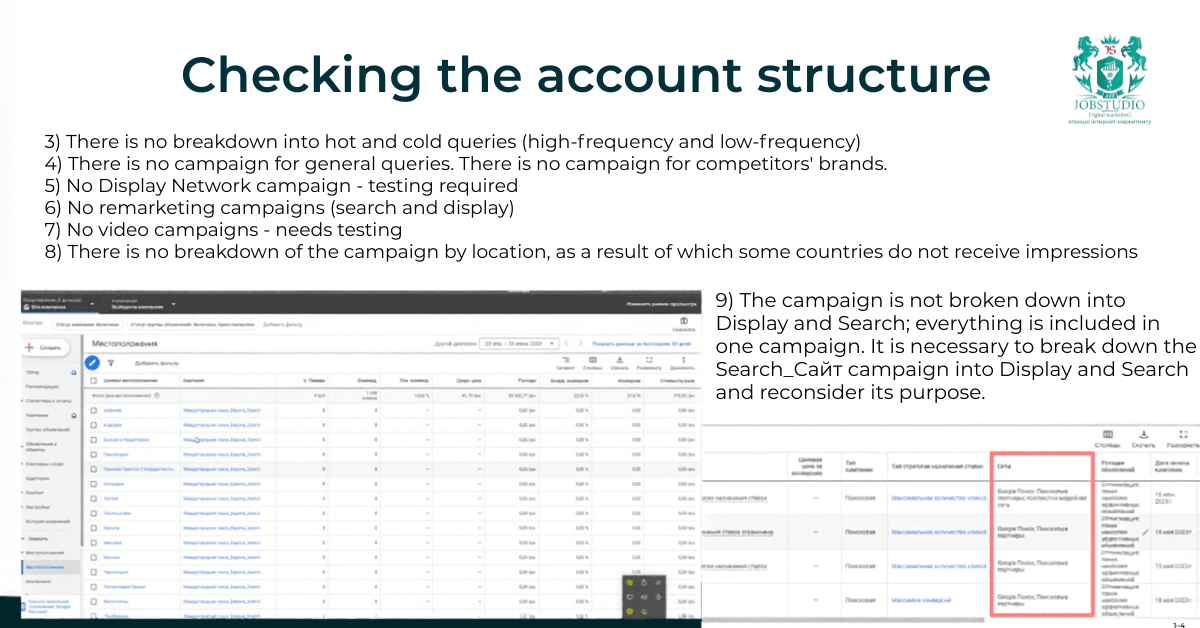

We started with the account structure. We checked to see if it included separate campaigns for brand-related queries, competitor queries, hot and cold clusters, general queries, display ads, remarketing, video, and geographic segmentation.

We also checked to see if different types of traffic—each requiring distinct management approaches—had been mixed within a single campaign. In performance advertising, this is fundamental: if the structure is poorly designed, any subsequent optimization becomes more expensive and harder to manage.

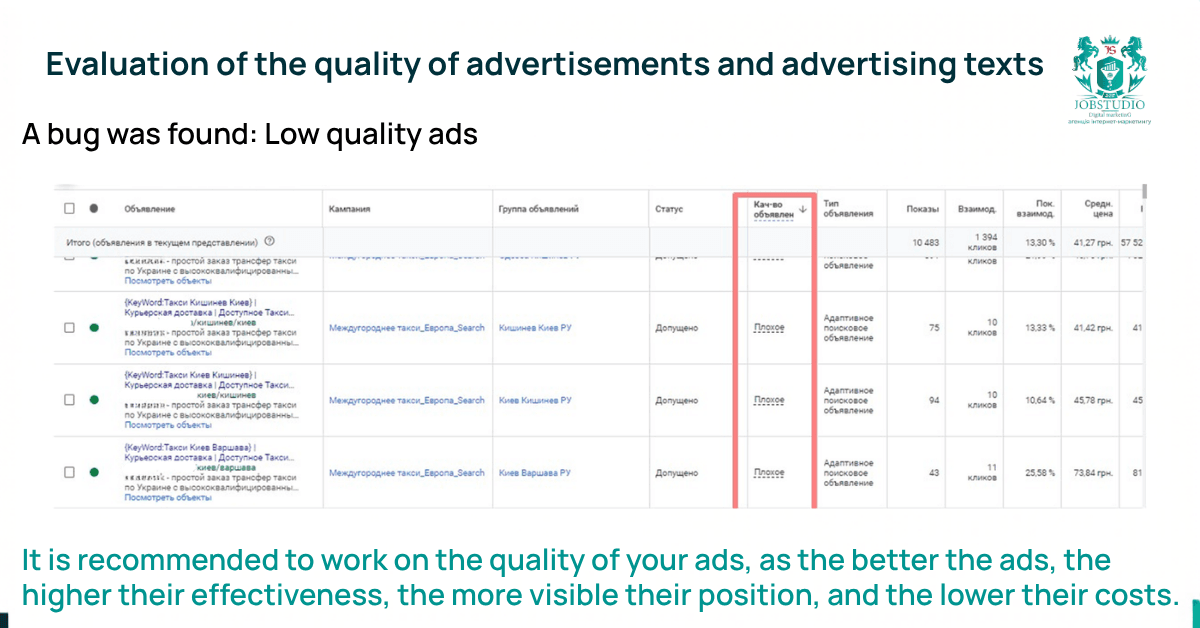

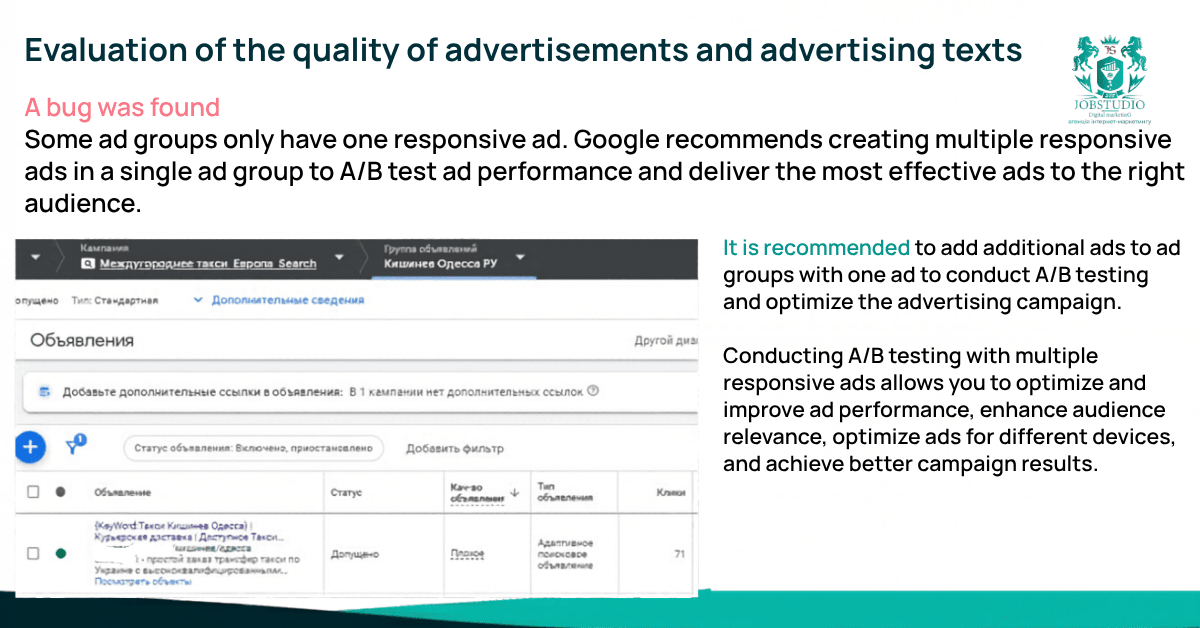

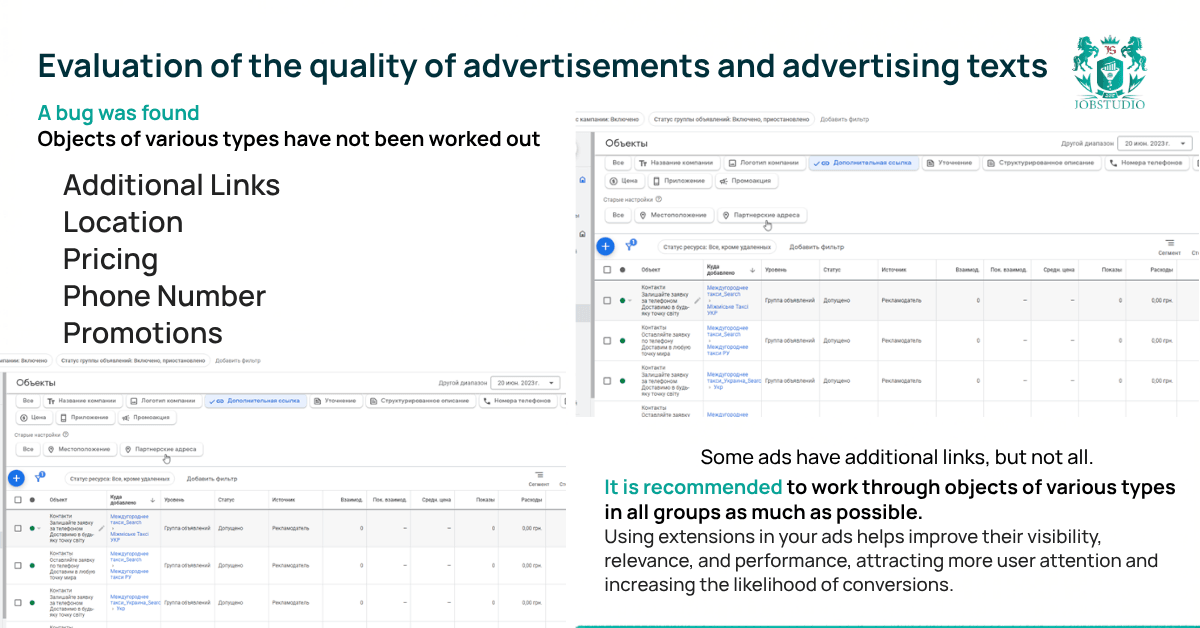

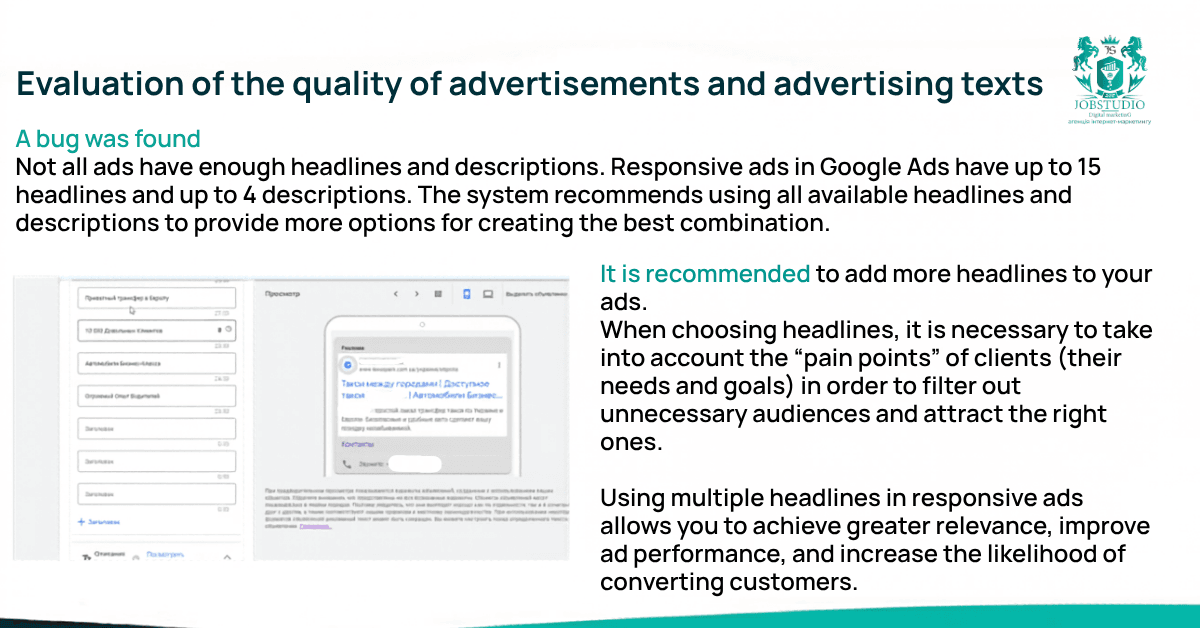

Next, we reviewed the ads themselves. We wanted to know whether each ad group had enough responsive search ads to leave room for A/B testing, whether there were enough headlines and descriptions, and whether all available ad extensions were set up and fully used: sitelinks, location, prices, phone number, and promotions. In niches with high competition for commercial demand, a weak ad doesn’t always “kill” a campaign right away, but it does erode its potential bit by bit: through lower CTR, weaker relevance, and poorer control over the message.

Why is this important?

Reaching your target audience: Well-written and engaging ads help attract the attention of potential customers and drive them to your website or page.

High click-through rate (CTR): High-quality ads typically have a higher click-through rate. The more users click on your ad, the more traffic you drive to your website or landing page. A high CTR also has a positive impact on ad rank and helps lower the cost per click.

Relevance and alignment: If an ad doesn’t match what the user was searching for, they’re unlikely to click on it. This leads to a low CTR and poor ad performance.

Improving the quality of your ad campaigns: Google Ads takes ad quality into account when determining ad rank. High-quality ads can improve your ad’s position and relevance, helping you get more clicks and leads.

Cost optimization: When ads are high-quality and relevant, they attract more high-quality traffic. This helps lower the cost per conversion and achieve a better return on investment (ROI) for an advertising campaign.

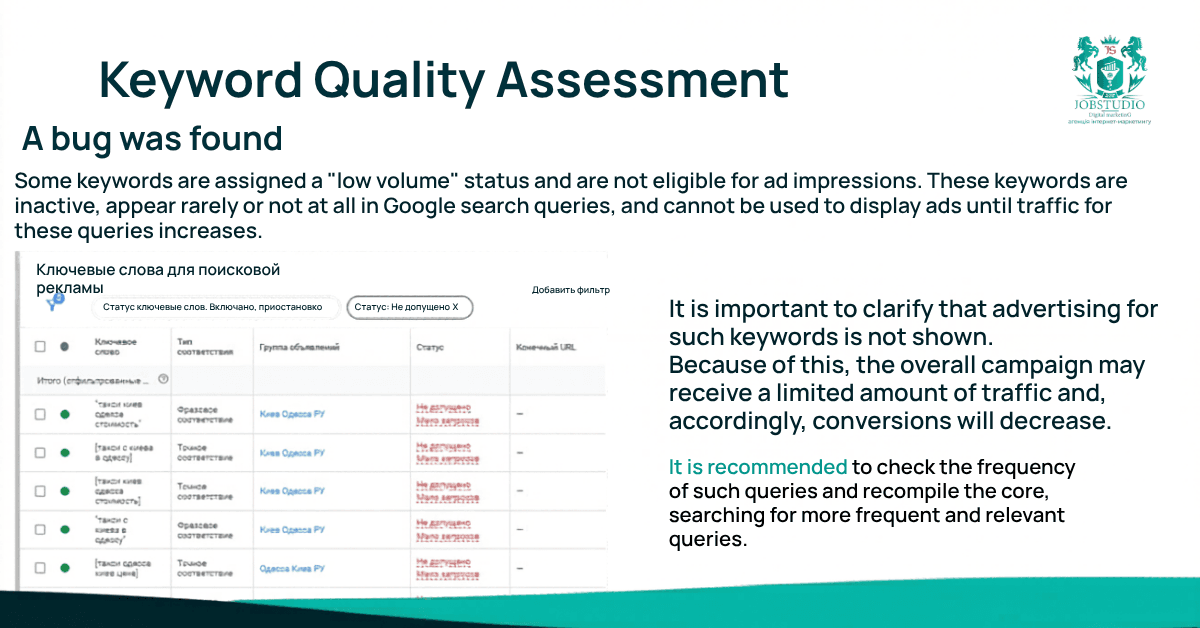

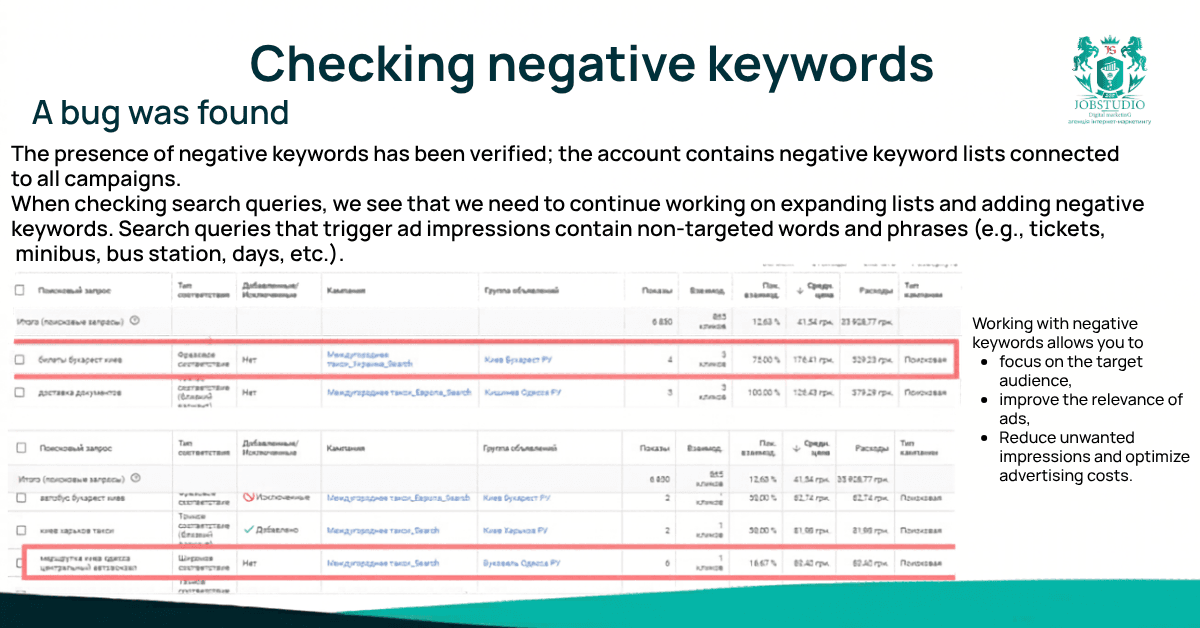

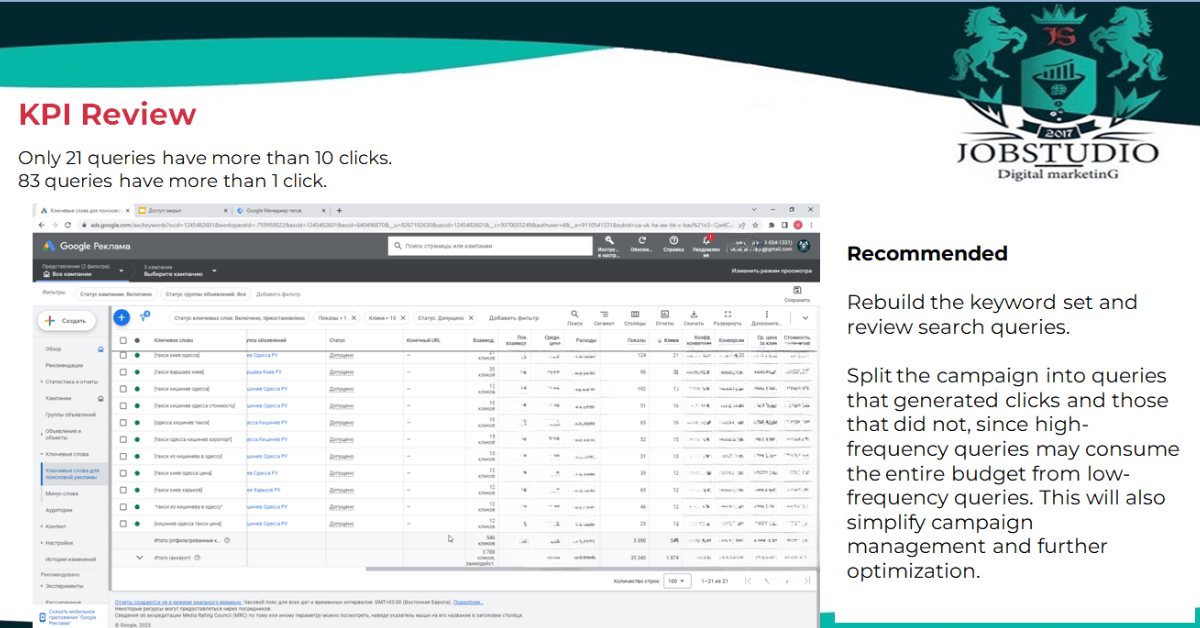

We also analyzed the semantics separately. We checked whether there were any keywords with a “low search volume” status, whether low-value queries were eating into the budget, whether negative keywords had been sufficiently refined, and how well the core keywords aligned with the audience’s actual search intent.

For topics such as transportation and transfers, this is critical: the difference between a high-priority commercial inquiry and informational or irrelevant noise is literally a matter of dollars and cents.

Analytics, UTM, and conversion tracking

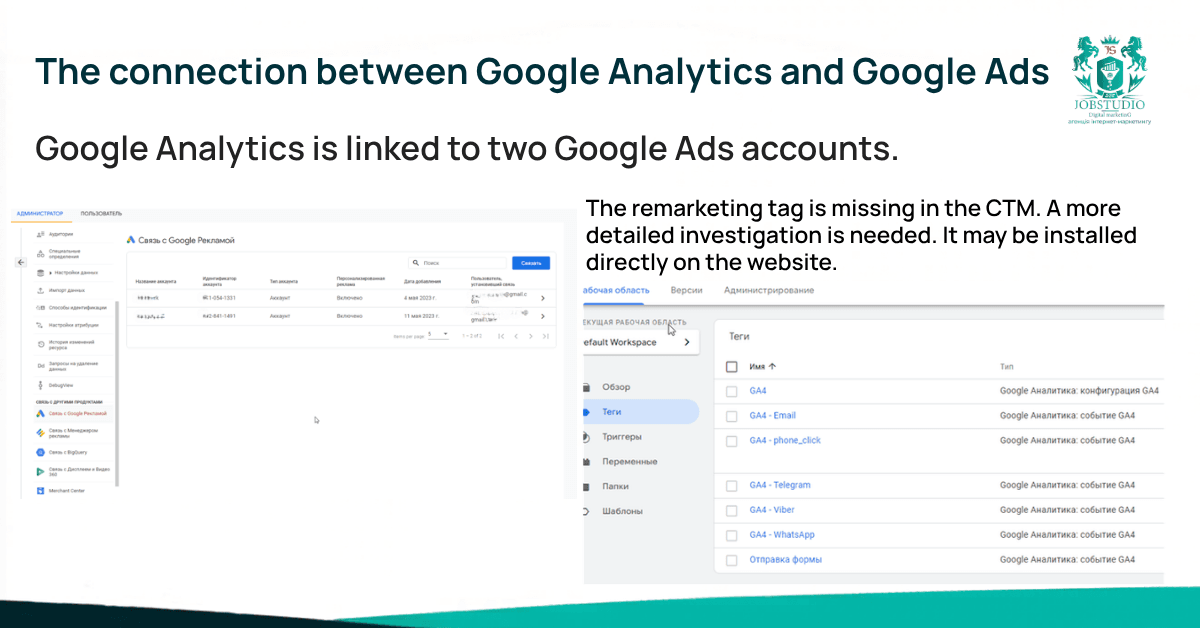

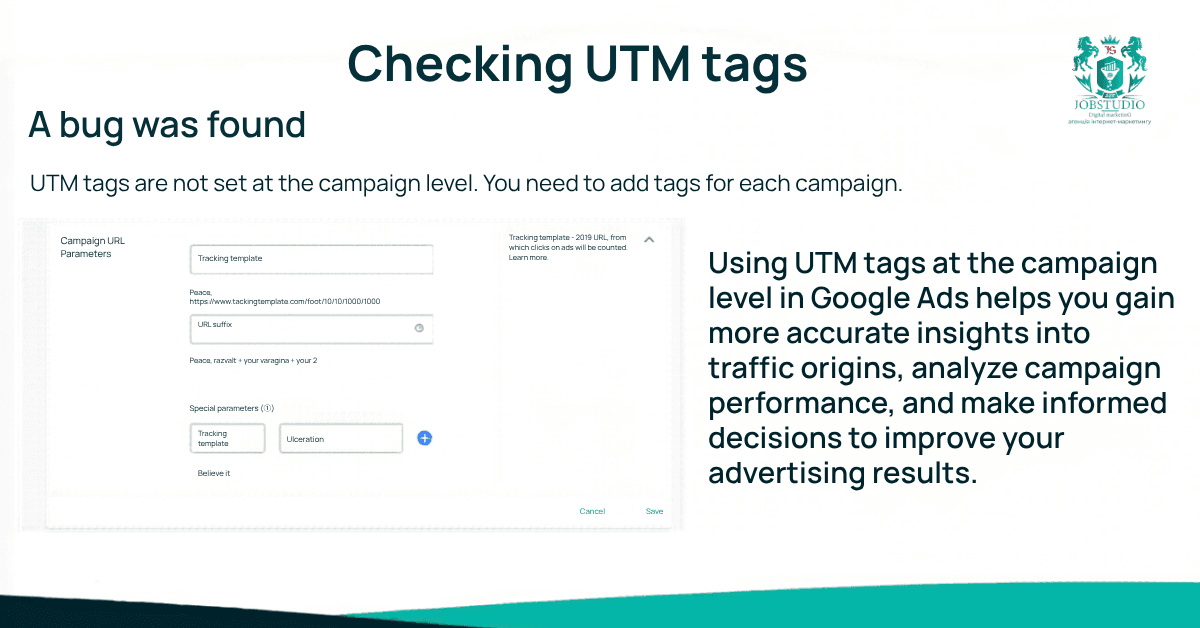

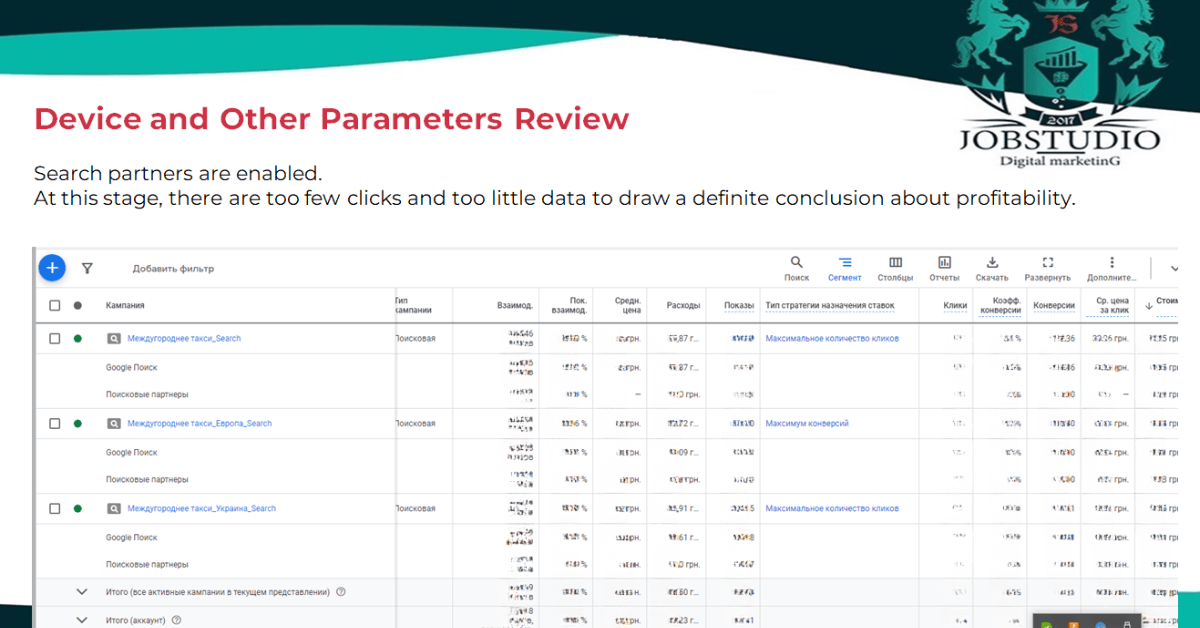

We checked the integration between Google Ads and Google Analytics, the presence and logic of UTM tags, the accuracy of goals in GTM, and the overall data quality. The audit revealed that Google Analytics was linked to two Google Ads accounts, but UTM tags had not been set at the campaign level.

The remarketing tag in GTM also required a separate review. For businesses, this means one simple thing: even when an ad campaign is already running, the management picture may be incomplete if not all analytics data has been collected accurately.
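To make the campaign-level UTM tagging mentioned above more concrete, here is a minimal Python sketch that appends UTM parameters to a final URL while keeping any query parameters already present. The function name `add_utm`, the example domain, and the campaign name are assumptions for illustration and do not come from the audited account.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def add_utm(final_url: str, campaign: str,
            source: str = "google", medium: str = "cpc") -> str:
    """Return final_url with UTM parameters added, preserving existing ones."""
    parts = urlparse(final_url)
    query = dict(parse_qsl(parts.query))
    query.update({
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
    })
    return urlunparse(parts._replace(query=urlencode(query)))


# Hypothetical URL and campaign name, for illustration only.
tagged = add_utm("https://example.com/routes/kyiv-warsaw", "kyiv_warsaw_search")
print(tagged)
```

In Google Ads itself, a campaign-level tracking template along the lines of `{lpurl}?utm_source=google&utm_medium=cpc&utm_campaign={campaignid}` is one common way to get the same result without editing each final URL by hand.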

We didn’t limit ourselves to the ad account: we also tested the website itself, including the relevance of the final URLs, loading speed, mobile usability, cross-browser compatibility, the presence of a form, the functionality of contact buttons, and basic UX scenarios. This is one of the key points for JobStudio: we don’t evaluate “settings for the sake of settings,” but rather the actual path to a request. If a user has already clicked but then can’t proceed normally or ends up in the wrong context, the advertising budget starts to drain away immediately after the click.

The audit revealed that the account structure was incomplete. It lacked a separate brand campaign targeting brand-related queries, a campaign targeting competitors, a breakdown between hot and cold queries, campaigns for general queries, Display Network campaigns, search and display remarketing, and video campaigns. Additionally, there was no comprehensive breakdown by location, which meant that some countries were not receiving impressions. In one of the campaigns, Search and Display were mixed, even though these are different mechanisms that work in different ways. For a systematic performance-based approach, these are no longer minor flaws but fundamental shortcomings.

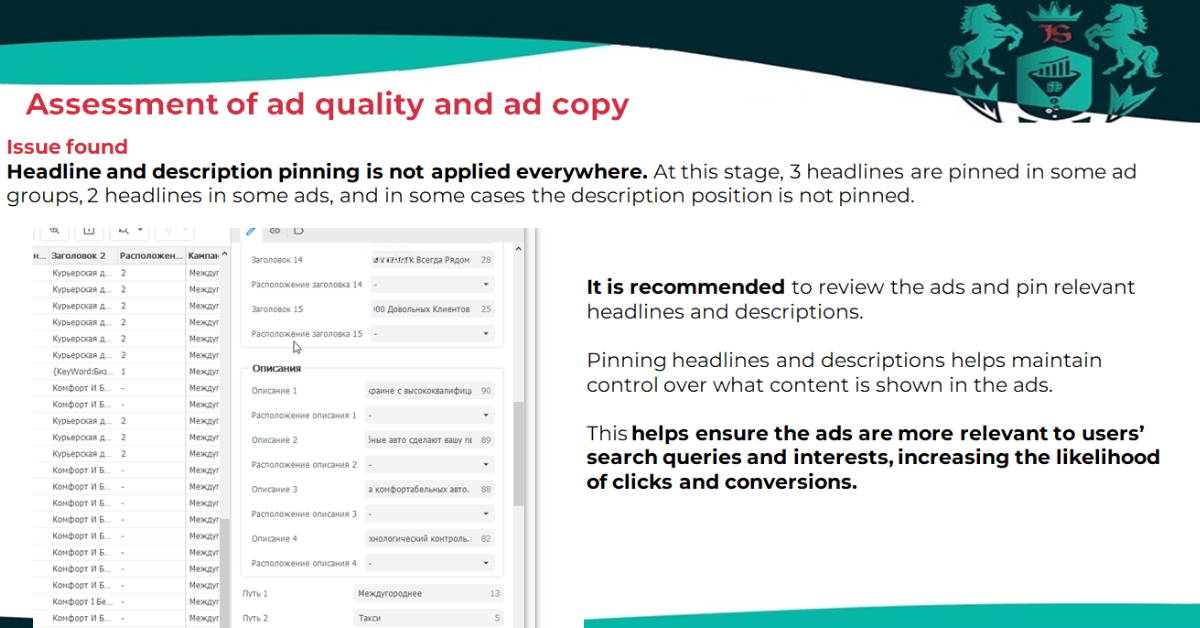

Some groups had only one responsive ad, which meant the system had almost no room for proper A/B testing. Headlines and descriptions weren’t set for all ads. Some ads lacked a sufficient number of headlines and descriptions, even though Google Ads recommends using as many options as possible.

Additionally, ad extensions such as sitelinks, locations, prices, phone numbers, and promotions were not consistently implemented. Some of them appeared in individual ads, but not across the entire account. As a result, the ads were underperforming even before users clicked through to the website.

Some of the keywords in the project had a “Low search volume” status, meaning they were essentially not appearing in search results. Additionally, during the analysis of search queries, irrelevant phrases such as “tickets,” “minibus,” “bus station,” and “DHL” were identified. This was a clear signal that the negative keyword lists needed refinement, and the keyword core needed to be cleaned up and reorganized. In the transportation sector, this is particularly critical: a user searching for tickets or a minibus and a user searching for a transfer service have different intents and different commercial value.
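The search-term review described above can be sketched in a few lines of Python. This is an illustrative filter, not the tooling used in the audit: the negative terms are the ones named in the text, while the sample search terms and the function name `find_negative_matches` are hypothetical.

```python
# Irrelevant terms named in the audit; a real negative list would be longer.
NEGATIVE_TERMS = ["tickets", "minibus", "bus station", "dhl"]


def find_negative_matches(search_term, negatives=NEGATIVE_TERMS):
    """Return the negative terms that occur as whole words or phrases."""
    # Normalize case and whitespace, then pad so only whole words match.
    padded = f" {' '.join(search_term.lower().split())} "
    return [neg for neg in negatives if f" {neg} " in padded]


# Hypothetical search terms, for illustration only.
search_terms = [
    "taxi kyiv warsaw",
    "bus station warsaw tickets",
    "DHL kyiv warsaw",
]

for term in search_terms:
    matches = find_negative_matches(term)
    status = "exclude" if matches else "keep"
    print(f"{term!r}: {status} {matches}")
```

Matching on whole words rather than substrings is a deliberate choice here, so that a negative term does not accidentally block a longer, unrelated word that happens to contain it.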

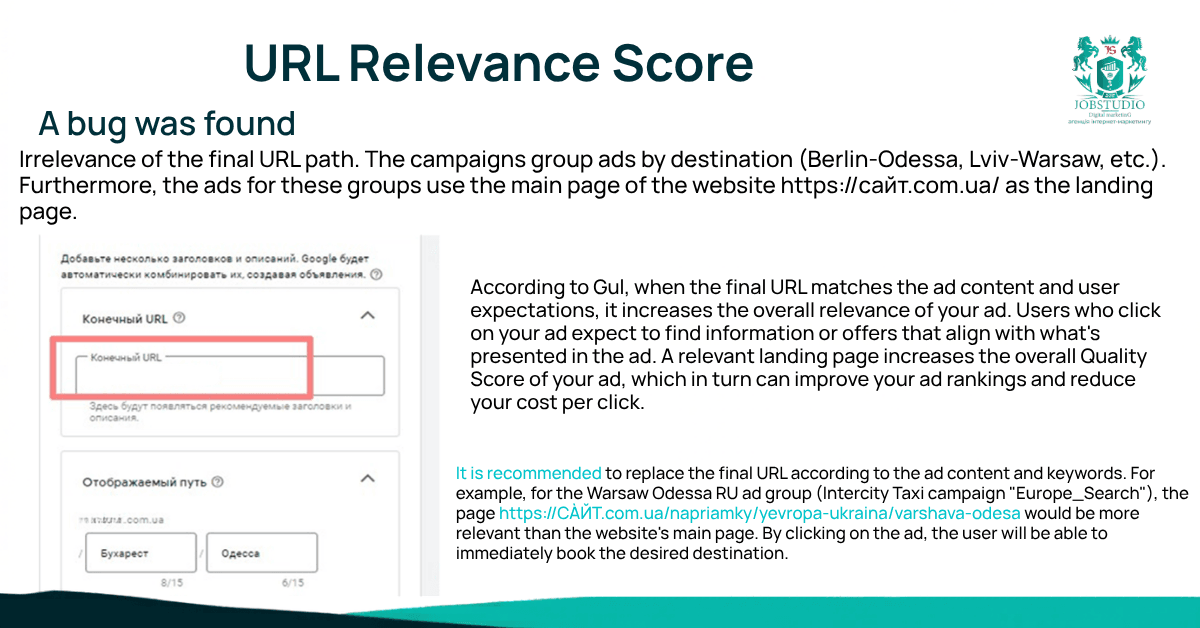

One of the most telling findings of the audit concerned landing pages. The campaigns were already grouped by destination, but for some of these groups the website’s homepage was used as the landing page. The audit gave a direct example: for a group such as “Warsaw–Odessa,” the page for that specific route would have been more relevant than the homepage. For the user, this means having to search further for the information they need. For the ad, it means lower relevance, a weaker post-click experience, and a higher risk of losing conversions.
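A check like this is easy to automate once the ad groups and their final URLs are exported. The sketch below flags groups that land on the homepage; the URLs and the group-to-URL assignments are hypothetical placeholders, not data from the audited account.

```python
from urllib.parse import urlparse


def uses_homepage(final_url: str) -> bool:
    """True when the URL path is empty or '/', i.e. the ad lands on the homepage."""
    return urlparse(final_url).path in ("", "/")


# Route-style group names as in the audit; URLs are made up for illustration.
ad_groups = {
    "Kyiv-Warsaw": "https://example.com/routes/kyiv-warsaw",
    "Warsaw-Odessa": "https://example.com/",
}

needs_route_page = [name for name, url in ad_groups.items() if uses_homepage(url)]
print(needs_route_page)  # ['Warsaw-Odessa']
```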

Formally, the necessary events had been set up in Analytics, but testing revealed that part of the logic wasn’t working in practice. The submission form hadn’t been implemented, even though it existed in the website’s code. Some of the messenger buttons lacked links, which meant the goals physically didn’t trigger. UTM tags weren’t set at the campaign level. In other words, the business could only see part of the real picture. And when data is incomplete, automated strategies, budget decisions, and conclusions about effectiveness also risk being inaccurate.
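Buttons without links are the kind of problem a simple script can surface before any traffic is paid for. As a rough sketch using only Python’s standard library, the parser below lists `<a>` elements whose `href` is missing, empty, or just `#`. The HTML snippet and phone numbers are invented placeholders; a real check would run against the site’s actual pages.

```python
from html.parser import HTMLParser


class EmptyLinkFinder(HTMLParser):
    """Collect the text of <a> elements whose href is missing, empty, or '#'."""

    def __init__(self):
        super().__init__()
        self.broken = []
        self._in_broken_link = False
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = (dict(attrs).get("href") or "").strip()
            self._in_broken_link = href in ("", "#")
            self._text = []

    def handle_data(self, data):
        if self._in_broken_link:
            self._text.append(data.strip())

    def handle_endtag(self, tag):
        if tag == "a" and self._in_broken_link:
            self.broken.append(" ".join(t for t in self._text if t))
            self._in_broken_link = False


# Placeholder markup, for illustration only.
html = """
<a href="tel:+380000000000">Call</a>
<a href="">Telegram</a>
<a>Viber</a>
<a href="https://wa.me/380000000000">WhatsApp</a>
"""

finder = EmptyLinkFinder()
finder.feed(html)
print(finder.broken)  # ['Telegram', 'Viber']
```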

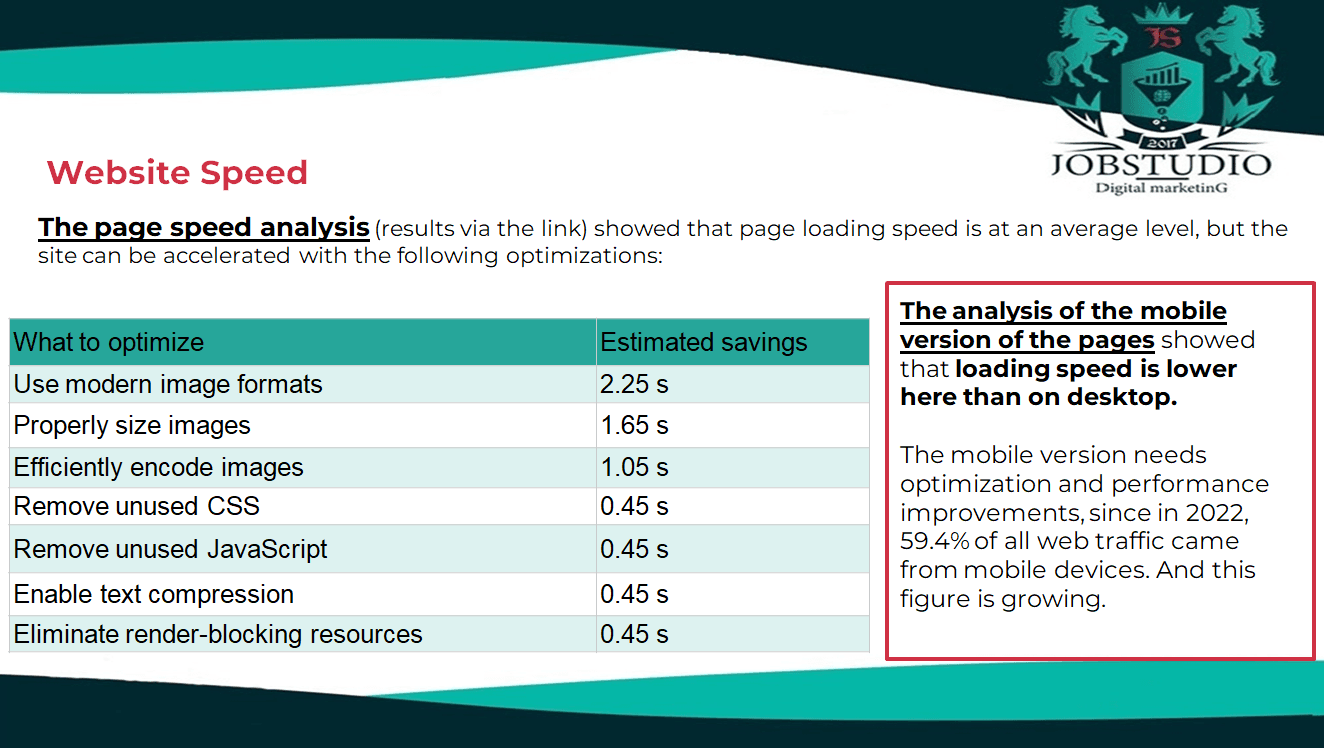

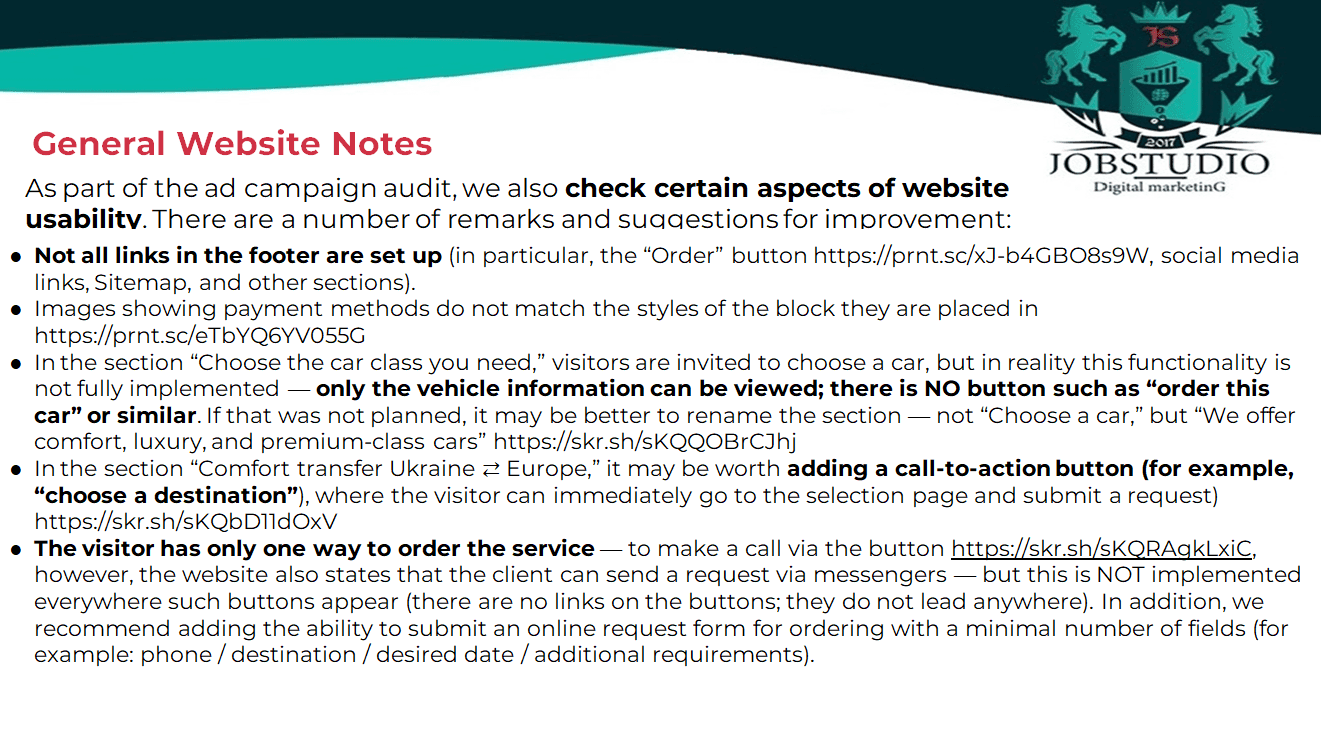

The website also turned out to be not just a neutral background, but a specific source of losses. Analysis showed that the mobile version of the pages loaded more slowly than the desktop version. A Google usability check returned the rating “page is not mobile-friendly” because not all resources were loading. On some tablet resolutions, the footer was not displayed. Additionally, the audit found that not all links in the footer were active, and the “Select a car of the class you need” section did not lead the user to the desired action: a user could only view the information but had no clear button to order a car. This is precisely the case where advertising brings in an interested user, but the website does not help them quickly proceed to making a request.

When an account lacks separate campaigns for brand, competitor, display, remarketing, and video scenarios, the business is simply unable to capture a portion of the demand it could otherwise capture. In other words, the problem wasn’t just in optimizing existing campaigns, but also in the fact that the system for covering demand was incomplete. In such a situation, the account can function, but it starts out with limited potential.

Due to weaknesses in semantics, “Low search volume” statuses, and insufficiently strict negative keyword lists, the account attracted some noise traffic. Separately, the audit noted that the majority of the budget was allocated to Ukraine, with a smaller portion going to Europe, even though some countries were not fully utilized due to the lack of proper geographic targeting. Such imbalances are not always visible in a single report, but over time, they reduce the system’s profitability.

Even strong commercial demand won’t help if, after clicking, the user lands on the wrong page or can’t interact with it properly. In this case, the issues were very specific: irrelevant URLs, inactive messenger buttons, a non-functional form, a flawed car selection process, and mobile UX problems. For the business, this means one simple thing: the click has already been paid for, but some potential leads are lost right on the website.

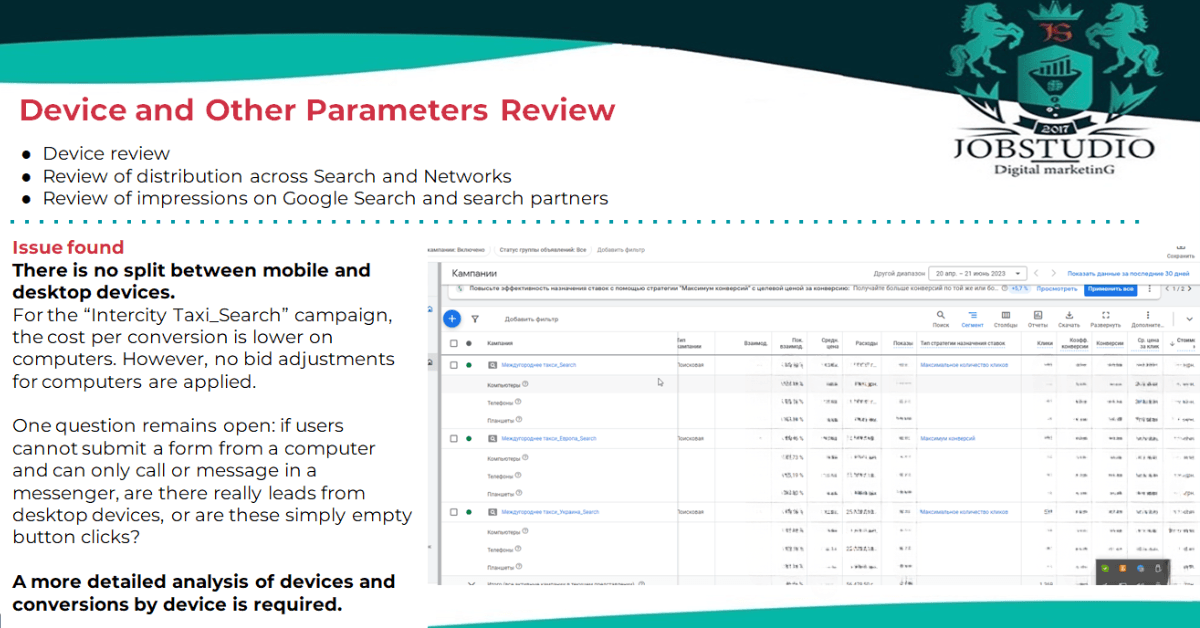

The audit also revealed differences in performance between individual campaigns and queries. The “Intercity Taxi_Search” campaign had the highest cost per conversion: 472.85 UAH, excluding VAT. At the same time, “Intercity Taxi_Ukraine_Search” had the lowest cost per conversion and appeared to be a candidate for scaling. Among the queries, the best conversion rates were for phrases such as “[taxi kyiv warsaw]” (32.68), “[taxi warsaw kyiv]” (25.77), and “[taxi chisinau odessa]” (19.25). But there were also some clearly expensive keywords: for the query “Bucharest Kyiv,” the cost per conversion was 2,320.57 UAH, and for “[taxis Odessa Chisinau],” 916.35 UAH. The audit also noted that a conversion does not necessarily equate to an order. In other words, simply increasing the budget here would have been premature: first, it was necessary to figure out exactly what needed to be scaled up and what needed to be restructured.
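The arithmetic behind this kind of triage is simple: cost per conversion is spend divided by conversions, and each keyword is compared against a target. The sketch below uses the two cost-per-conversion figures quoted above; the target of 500 UAH, the sample spend figures, and the function names are assumptions chosen purely for illustration.

```python
from typing import Optional


def cost_per_conversion(cost: float, conversions: float) -> Optional[float]:
    """Spend divided by conversions; None when there are no conversions."""
    if conversions <= 0:
        return None
    return cost / conversions


def triage(cpa: Optional[float], target_cpa: float) -> str:
    """Label a keyword relative to a target cost per conversion."""
    if cpa is None:
        return "no conversions: review"
    return "scale candidate" if cpa <= target_cpa else "restructure first"


# Hypothetical target; the audit does not state one.
TARGET_CPA_UAH = 500.0

# Cost-per-conversion figures quoted in the audit (UAH).
reported_cpa = {
    "Bucharest Kyiv": 2320.57,
    "[taxis Odessa Chisinau]": 916.35,
}

for keyword, cpa in reported_cpa.items():
    print(keyword, "->", triage(cpa, TARGET_CPA_UAH))

# Made-up spend and conversion counts, only to show the formula itself.
print(cost_per_conversion(1000.0, 4))  # 250.0
```

Because a conversion is not necessarily an order, a label like “scale candidate” is only a starting point for review, not a decision.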

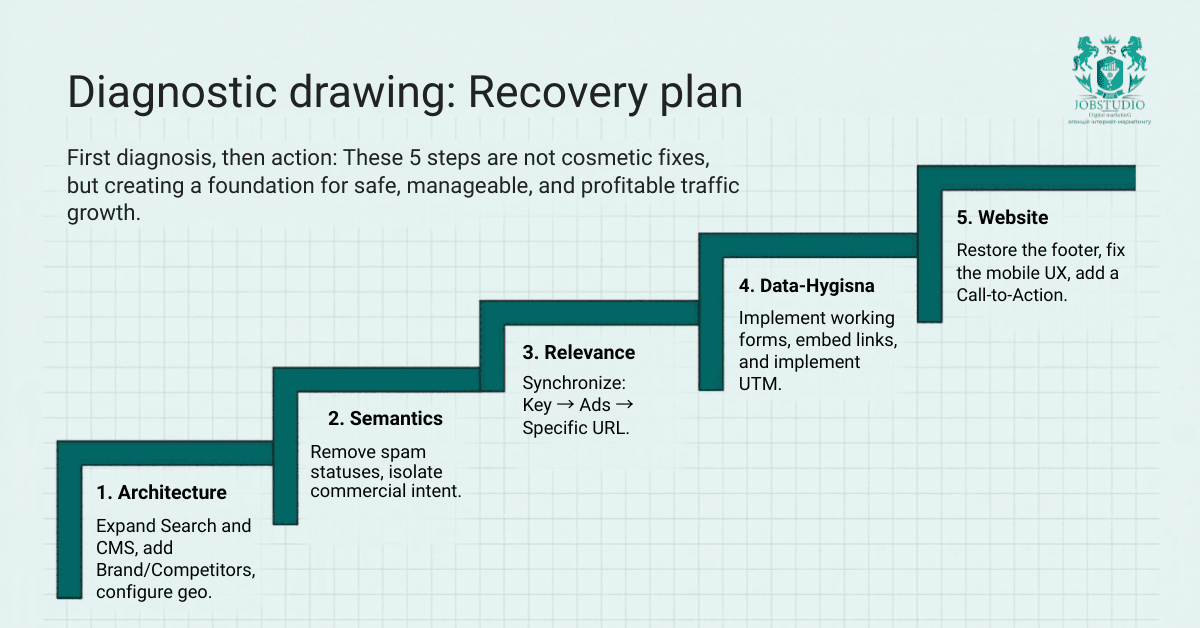

The first step is to finalize the account structure. Add any missing campaigns for brand queries, competitors, general clusters, the Google Display Network, remarketing, and video. Separate Search and Display campaigns, and organize them by geography to avoid losing impressions in certain countries. This approach allows you not only to “clear up the chaos” but also to regain control: to understand which segment is performing well, which ones are worth testing, and which ones should be scaled up.

The second step is to refine the semantics. Check the search volume of keywords marked as “Low search volume,” find more viable alternatives, remove weak or irrelevant clusters, and continue expanding the negative keyword lists. In this niche, it’s important not just to collect a lot of keywords, but to weed out those that lead not to conversions but to accidental traffic. That’s why the audit specifically recommended continuing to work on irrelevant queries and revising the keyword core to align with actual commercial demand.

The third step is to refine the ads. Add more responsive search ads to the ad groups for A/B testing, increase the number of headlines and descriptions, review which key elements are pinned and pin them correctly, and fully set up the ad extensions. Separately, align the “keyword → ad → landing page” logic so that the user sees exactly the message they expect and lands exactly where they need to. For performance advertising, this isn’t just cosmetic; it directly impacts relevance and the likelihood of a conversion.

The next section was analytics. Here, the recommendations were very specific: set UTM tags at the campaign level, verify that all goals were being tracked, review the logic behind the messenger buttons separately, finish the request form, and ensure that all key user scenarios were actually being tracked. This was particularly important for the project because some of the goals existed “on paper” but didn’t work in real-world scenarios. And without clean analytics, performance decisions become weaker right at the management level.

A separate set of recommendations focused on the website. We needed to fix broken links in the footer, implement a functional form, add links to all contact buttons, review the user flow on the destination pages, resolve mobile issues, and refine sections where users lacked a clear call to action. Such fixes often seem “less flashy” than relaunching campaigns, but the problems they address are precisely what eat into the budget after the click, where businesses least want to lose money.

The main value of such an audit is that it doesn’t just give you a vague sense that “something isn’t quite right,” but rather provides a clear map of the system’s weaknesses. After the audit, it became clear exactly where the system was losing effectiveness: in the account structure, in the semantics, in irrelevant URLs, in the goals, in the mobile UX, and in the interaction scenarios themselves. This is the right starting point for any further optimization: diagnosis first, then action.

The second outcome was that the audit helped distinguish critical issues from secondary ones. Not all problems are equally damaging to the business. Some relate to technical usability, while others involve direct financial losses. In this case, the top priorities were account structure, semantics, landing page relevance, conversion accuracy, and the functionality of key touchpoints on the site. It is precisely this prioritization that allows us to avoid spreading our resources too thin and instead operate like a boutique performance agency: deeply, precisely, and without unnecessary bureaucracy.

The third outcome is that the audit laid the groundwork for future growth—not abstract growth, but strategic growth. It revealed which campaigns to prioritize, which search terms to group into separate campaigns, where to increase bids and implement manual management, and where, conversely, to first eliminate waste. This is especially important for businesses that are already advertising and want to grow further: without such a foundation, scaling becomes a guessing game rather than data-driven work.

An audit like this is most useful when your ads are running but you don’t feel in control. When you’re getting clicks and even some conversions, but it’s unclear exactly where the effectiveness is falling short. When a business is preparing to scale its budget and wants to address the weak spots first. And especially when there are concerns not just about the ads, but about the entire ecosystem: semantics, analytics, the website, the mobile experience, and touchpoints. This audit revealed exactly that kind of systemic picture.

Looking only at the ad account, you can identify some of the issues, but not all of them. In this particular case, testing the website revealed that some of the losses occurred after the click: inactive buttons, an incomplete form, irrelevant landing pages, and a poor mobile experience. In other words, even properly configured ads won’t deliver maximum results if the landing page doesn’t drive the user to take action. For a performance-driven approach, checking only the ad account is too narrow.

Semantics and landing pages matter so much because they determine the quality of traffic and the conversion potential after a click. If ads are shown for queries with low commercial value or direct users to the wrong page, the system begins to lose effectiveness throughout the entire chain. This audit identified both off-target search queries and examples of irrelevant URLs for route groups. This is a classic scenario where the problem lies not in the bid, but in the logic of the targeting.

An audit provides the most important thing—clarity. It reveals where the system is underperforming, what needs to be fixed first, which areas are actually draining the budget, and which are merely creating technical noise. For businesses, this means fewer decisions based on gut feelings and more actions grounded in facts. That’s exactly how we work at JobStudio: first we diagnose, then we build a plan, and only then do we scale.

In most cases, increasing the budget before an audit is not the right move, at least if you already have the feeling that the ads “seem to be working, but not as well as they could.” In this case, the audit clearly showed that the system was suffering from structural, semantic, analytical, and site-related issues all at once. In such a situation, increasing the budget would only exacerbate some of the problems. First, you need to plug the leaks; then, scale up the strong areas.

The main takeaway from this case study is simple: the problem wasn’t a single setting or a single ad. The audit revealed a chain of losses. The account structure, semantics, ads, analytics, and website weren’t functioning as a unified system. That’s why effectiveness was being lost not at a single point, but throughout the entire user journey.

If you’re already running ads but don’t understand why you aren’t getting more leads, the problem might not be where you’re looking. It’s not just about bid prices. It’s not just about creative assets. It’s not just about keywords. Very often, losses are spread across several levels of the system. And that’s exactly why a thorough audit gives a business more than just a “list of observations”: it restores control over the situation.

A thorough audit allows you to identify hidden leaks before you invest even more money into the system. For us at JobStudio, this is the standard approach of a boutique performance agency: we don’t sell empty promises or use vague generalizations, but instead break down the problem into facts, identify growth opportunities, and build solutions tailored to specific business logic, not just “market averages.” That’s exactly how advertising stops being a collection of campaigns and starts working as a managed growth tool.